4 Lessons Our Cats Taught Us About Training Evaluation

We are cat lovers at Kirkpatrick Partners. Here are four simple yet valuable lessons our cats remind us of every day.

Lesson #1: Start With the End in Mind

Starting with the end in mind is critical to every training program. Attila has no problem identifying his top of the mountain. When he got a new scratching post, instead of grooming his claws from the ground, he climbed straight to the top and started exploring from there.

Take a page out of Attila’s book. The next time you receive a training request, climb to the top of the mountain and discuss how the request relates to your organization’s highest-level goals. Step out of the training mindset and ask: which of the organization’s highest goals could this program positively affect?

Lesson #2: Accountability After Training is Everyone’s Job

Attila thought he could get away with jumping on the table, but his sister, Dena, caught him in the act. She held him accountable and chased him down, though apparently not before we spotted him and snapped a photo.

Level 3 required drivers (the processes and systems that reinforce, monitor, encourage, and reward performance on the job) do not always require additional resources. Sometimes all they need is a culture in which co-workers help each other succeed and call each other out when they see the wrong things happening. While this approach can work in any culture, it is especially useful for organizations that struggle to get post-training support from managers and supervisors.

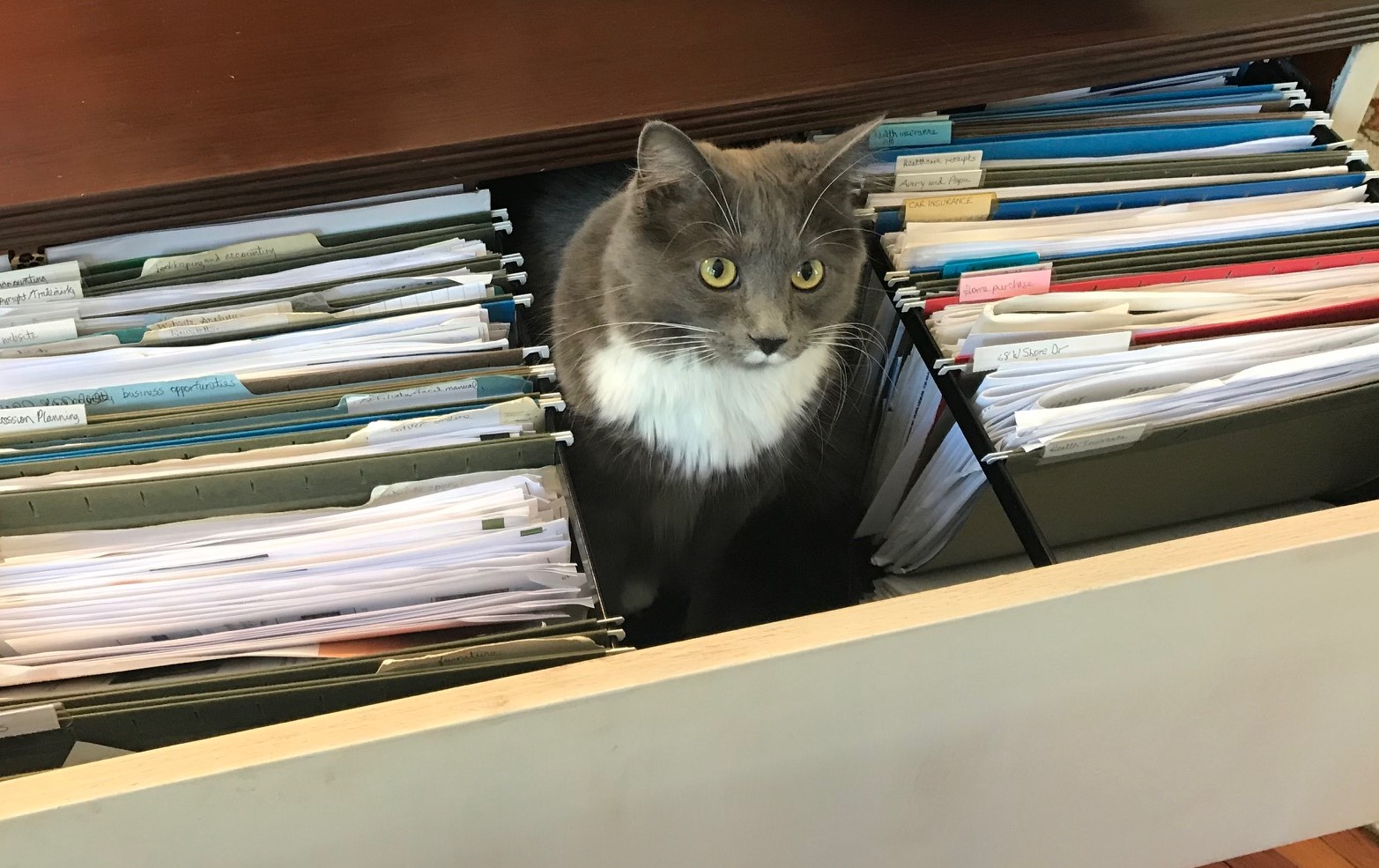

Lesson #3: Don’t Ignore Incoming Data

At the end of many programs, participants receive an evaluation form so they can give their input. All too often, the facilitator skims the completed forms to see how they did and then files them away in a corner of the office.

If this sounds familiar, here are some actions you can take so that the input you receive is neither ignored nor seen as ignored, and so that your training evaluation efforts carry more meaning:

1. Enter the data into an electronic repository so it can be aggregated and used to make objective decisions. When you change a program as a result of participant feedback, announce the change publicly.

2. If the program spans multiple days or modules, provide a brief evaluation form at the end of each segment and share the findings at the beginning of the next session. Explain to participants how you will respond to their input throughout the rest of the program.

3. Incorporate high-level findings into program reports. Data related to on-the-job application is especially worth highlighting.

Lesson #4: Mine Existing Data

Training professionals often say they are unable to gather data at Kirkpatrick Levels 3 and 4. They should follow Lila’s lead and search existing data for relevant information. Here are some examples of data that may already be available:

• Performance-related metrics being tracked by business units

• Customer service scores

• Employee engagement or satisfaction information

• Turnover or retention statistics

Your goal is to gain access to this existing data and look for a correlation between what people learned (Level 2), how they are applying it on the job (Level 3), and subsequent changes in results (Level 4).
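Once the data is in a spreadsheet, checking for a correlation can be fairly simple. The minimal Python sketch below assumes you can line up, for each participant, a Level 3 measure (here, a hypothetical count of observed on-the-job applications of a new skill) with a Level 4 measure (here, a hypothetical change in that person's customer service score). It then computes the Pearson correlation coefficient between the two. The `pearson` function and all of the numbers are illustrative placeholders, not real program data.

```python
from math import sqrt


def pearson(xs, ys):
    """Return the Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items each")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance numerator: how the two measures move together around their means
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Spread of each measure around its own mean
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical, illustrative data for five participants (not real results).
# Level 3: observed on-the-job applications of the new skill per month
application = [2, 5, 3, 8, 6]
# Level 4: change in each participant's customer service score after training
score_change = [1.0, 3.5, 2.0, 6.0, 4.0]

r = pearson(application, score_change)
print(f"r = {r:.2f}")
```

A value of r near +1 suggests the two measures rise together, while a value near 0 suggests little linear relationship. On its own, a correlation does not show that the training caused the change, so treat it as one piece of evidence alongside the other data you gather.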

Additional Resources